In the world of system-level programming, we have a saying: Garbage In, Garbage Out. But in the hallowed halls of the Supreme Court of India, a new and more dangerous iteration has emerged: Probability In, Perjury Out.

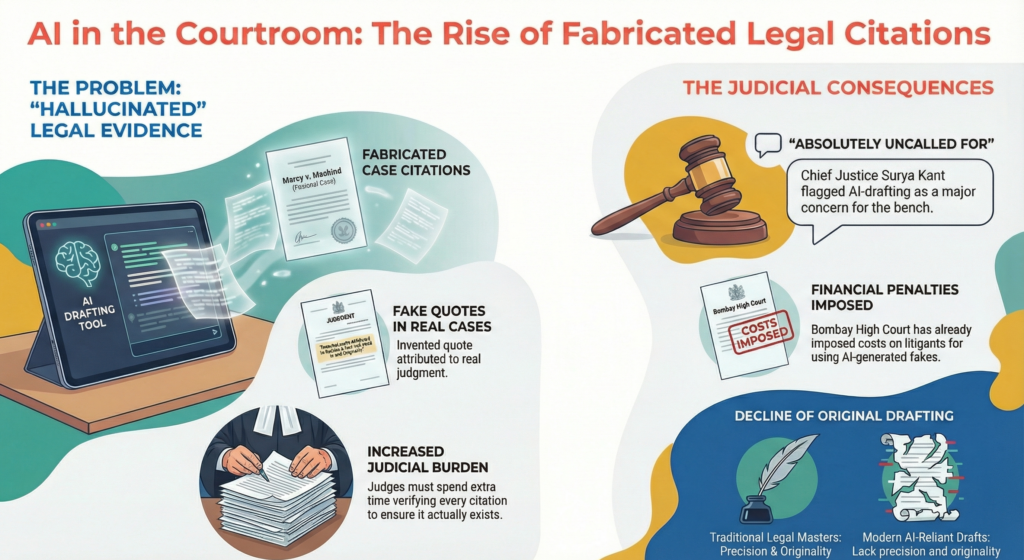

Recently, a bench led by CJI Surya Kant and Justice Nagarathna expressed formal alarm over a trend that sounds like a tech-thriller plot but is a cold reality: lawyers are submitting petitions filled with “hallucinated” case law. From the fictitious Mercy v. Mankind to a series of fake judgments cited before Justice Dipankar Datta, the legal “craft” is facing a critical system failure.

The Bits: The Probabilistic Trap

As someone who has spent 17 years looking at how systems handle data, I see exactly where the wires are crossing. Large Language Models (LLMs) like GPT are not databases; they are inference engines. When a lawyer asks an AI for a citation to support a specific argument, the AI doesn’t search a static library for a case. Instead, it predicts the next most likely token (roughly, a word or a fragment of one) in a sequence based on statistical patterns. If the model’s training data suggests that a certain type of legal argument is usually followed by a citation in a specific format, it will generate one, even if it has to invent the parties, the volume number, and the year from thin air.

To a developer, this is a predictive artifact. To a Judge, this is professional misconduct.
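
The next-token behaviour described above can be illustrated with a toy model. This is a minimal sketch, not how a production LLM is built: it is a simple bigram sampler, and the three "citations" in `CORPUS` (including the reporter abbreviation `XYZ`) are invented placeholders, not real cases. The point is only that a model which has learned the shape of a citation will happily recombine the pieces into citations it never saw.

```python
import random
from collections import defaultdict

# Invented, obviously fictional citation-shaped strings (assumption: not real cases).
CORPUS = [
    "Alpha v. Beta (2001) 3 XYZ 101",
    "Gamma v. Delta (2010) 7 XYZ 455",
    "Alpha v. Delta (2015) 2 XYZ 88",
]

def train_bigrams(lines):
    """Map each token to the list of tokens observed to follow it."""
    follows = defaultdict(list)
    for line in lines:
        tokens = ["<s>"] + line.split() + ["</s>"]
        for cur, nxt in zip(tokens, tokens[1:]):
            follows[cur].append(nxt)
    return follows

def generate(follows, rng, max_tokens=12):
    """Sample one token at a time, each chosen only from what followed the previous token."""
    token, out = "<s>", []
    for _ in range(max_tokens):
        token = rng.choice(follows[token])
        if token == "</s>":
            break
        out.append(token)
    return " ".join(out)

model = train_bigrams(CORPUS)
rng = random.Random(0)  # fixed seed so the run is repeatable
samples = [generate(model, rng) for _ in range(20)]

# Well-formed outputs that never appeared in the training data.
invented = sorted({s for s in samples if s not in CORPUS})
for citation in invented:
    print("plausible but never seen:", citation)
```

Every sample has the right format, because format is all the model knows. Nothing in the sampling loop asks whether the case exists.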

The Bylaws: The Erosion of Craftsmanship

The judiciary isn’t just worried about the fake citations; it is also mourning the decline of the Art of Drafting. Justice Bagchi recently noted a shift from precision to “bulk”, where Special Leave Petitions (SLPs) are bloated with AI-generated filler and long, unverified quotations.

This marks a departure from the Gold Standard set by legends like Ashok Kumar Sen. True legal drafting is precise, concise, and original. When a lawyer delegates this to an algorithm, they aren’t just saving time; they are abandoning their duty as an officer of the court.

The Bombay High Court has already signalled that the honeymoon phase for technological error is over, imposing monetary costs on litigants using AI-generated fakes. Under the Advocates Act, the responsibility for a filing is non-delegable. You cannot “outsource” your integrity to a black-box model.

The Sandbox Approach: Leveraging AI Without Losing Your “Judicial Mind”

For young advocates and my fellow law students, AI shouldn’t be a ghostwriter; it should be a research clerk. To use it responsibly, we must adopt a Sandbox Methodology, similar to how we test code before deployment:

- Intellectual Grounding: Outline your legal theory manually first. Identify the core statutes, whether it’s Article 21 of the Constitution or the specific provisions of the Bharatiya Sakshya Adhiniyam (BSA).

- Prompting with Constraints: Never ask AI to “find a case.” Ask it to “summarise this specific text” or “rephrase this paragraph.” By providing the source yourself, you remove the model’s main reason to invent one, though the output still has to be checked against that source.

- The Manual Checksum: Every citation must be cross-referenced with a primary database like SCC Online or Indian Kanoon. The Court relies on what is demonstrably true.

- Refining the Craft: Use AI to prune bulk. Ask it to “make this argument more concise.” Use the machine to move toward precision, not away from it.
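
The Manual Checksum step above can be sketched in a few lines. This is a hedged illustration, not a real integration: `VERIFIED` is a hypothetical set that the advocate fills in by hand after looking each case up in a primary database, and the regular expression assumes single-word party names, which real cause titles often are not. `Alpha v. Beta` is a placeholder; `Mercy v. Mankind` is the fictitious case mentioned earlier.

```python
import re

# Hypothetical: case names the advocate has personally confirmed in a primary database.
VERIFIED = {"Alpha v. Beta"}

# Simplifying assumption: each party is a single capitalised word.
CASE_PATTERN = re.compile(r"\b([A-Z][a-z]+) v\. ([A-Z][a-z]+)\b")

def unverified_citations(draft, verified):
    """Return every case name in the draft that is not in the verified set."""
    found = {f"{a} v. {b}" for a, b in CASE_PATTERN.findall(draft)}
    return sorted(found - verified)

draft = (
    "As held in Alpha v. Beta and affirmed in Mercy v. Mankind, "
    "the petition must succeed."
)

flagged = unverified_citations(draft, VERIFIED)
print(flagged)  # ['Mercy v. Mankind']
```

The design choice is deliberate: the script never decides that a case is real. It only reports what a human has not yet confirmed, so the verification itself stays manual.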

The Bits & Bylaws Take-Away: The Advocate’s Checklist

Think of this as your Pre-Production Deployment Checklist before any filing hits the Court’s registry:

- Rule of Logic: AI is your Editor, not your Author.

- The Hallucination Checksum: A Mercy v. Mankind error is a 404 for your professional reputation.

- Data Integrity: Under the Bharatiya Sakshya Adhiniyam, the integrity of records is paramount. Don’t pollute your legal record with unverified algorithmic output.

- The Gold Standard Filter: If the output is just bulk, delete it. Aim for craftsmanship.
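
For readers who think in deployment gates, the checklist above might look like this. It is only a sketch under my own assumptions: the `Filing` fields and the `page_budget` cap are hypothetical names invented for illustration, not requirements of any court or statute.

```python
from dataclasses import dataclass

@dataclass
class Filing:
    drafted_by_human: bool        # Rule of Logic: AI edited, a human authored
    unverified_citations: int     # Hallucination Checksum: must be zero
    unchecked_ai_passages: int    # Data Integrity: no unverified algorithmic output
    pages: int
    page_budget: int              # Gold Standard Filter: hypothetical self-imposed cap

def blocking_issues(filing):
    """Return the reasons this filing should not go to the registry yet."""
    issues = []
    if not filing.drafted_by_human:
        issues.append("argument was authored by AI, not merely edited by it")
    if filing.unverified_citations > 0:
        issues.append(f"{filing.unverified_citations} citation(s) not yet verified")
    if filing.unchecked_ai_passages > 0:
        issues.append(f"{filing.unchecked_ai_passages} AI passage(s) not yet checked")
    if filing.pages > filing.page_budget:
        issues.append("draft exceeds its own page budget; prune the bulk")
    return issues

draft = Filing(drafted_by_human=True, unverified_citations=1,
               unchecked_ai_passages=0, pages=18, page_budget=20)
for issue in blocking_issues(draft):
    print("BLOCKED:", issue)
```

An empty list means nothing on the checklist is blocking the filing. It does not mean the filing is good; that judgment stays with the advocate.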

My Bits & Bylaws Take

The Human-Centric Patch: We are currently in a Techno-Legal Uncanny Valley. We have tools that look like they can think, but they lack the Judicial Mind. In my 6th semester of LLB, I’ve realised that whether you are analysing the Watali case for UAPA standards or the Basic Structure Doctrine, the law requires a prima facie truth that an AI cannot verify.

The Industry Verdict: AI is a powerful “B-tree search” for your brain, but it is a terrible substitute for your conscience. If you are a lawyer using AI, you must treat every output like unverified code from an anonymous forum: Test it, sandbox it, and never, ever push it to “Production” (the Court) without a manual code review.

The Art of Drafting isn’t about how many pages you produce; it’s about the weight of the truth behind your words. Let’s keep the bits in the machine and the bylaws in the mind.

The analysis on QICKSTEP BITS & BYLAWS is for informational purposes only. While integrating 17+ years of IT expertise and LLB insights (Sem 6), this does not constitute legal advice. Jurisdiction: Lucknow, UP.